Moderation on Blacksky-Only Posts: A Community Proposal

We have always built from our community and with our community. It was through this communication, in our People’s Assembly, that one of the biggest community needs surfaced: private posts.

We believe permissioned spaces are essential for community safety and for organizing together online, particularly at a time when abuses of privacy are becoming more common with growing political polarization. However, making posts permissioned and Blacksky-only means that Bluesky moderation would not have access to them, leaving moderation entirely to the Blacksky team.

Our team has explored several ways to make permissioned posts possible. Now we’re bringing the decision back to the community. Below are three approaches to moderation: a machine-learning system that automates moderation, a large-scale peer moderator program, or a hybrid model that combines both. We’re inviting your feedback to help decide how we should govern privacy together.

A machine learning approach

Before getting into the pros and cons, let us explain what we mean by machine learning. This is a tool that uses statistical patterns to predict whether any image or video content violates the Blacksky terms of service. That is it.

We are suggesting this route earnestly because it will help us scale most efficiently and reduce moderators’ and users’ exposure to damaging content. Our moderators already review incredibly high volumes of objectionable content daily, including targeted harassment, misogynoir, and coordinated abuse. They cannot review everything, and repeated exposure to the most harmful content takes a real toll.

Many studies have shown that content moderation work is closely linked with repeated trauma and can lead to serious mental health issues. We believe no one should have to sort through piles of triggering or disturbing content. This is the work automation is meant to do. Automation, however, does not mean that we are removing human review or sending data elsewhere. Blacksky data is only for Blacksky: we will never give your data to third parties or use it to train generative AI models.

If we were to move forward, we would evaluate current open source content moderation models and publish the results. The evaluation, coupled with human decision-making in our moderation tool, would use the ~100,000 photos and videos uploaded to Blacksky in the last 30 days to test the accuracy of the tool; this is roughly the number of photos and videos uploaded each month. The testing period lets us evaluate and customize off-the-shelf tools to the specific content and needs of our community. Below is a timeline of the roll-out of this tool, which includes two rounds of trial testing to adjust the system’s confidence threshold before launch.

- March 2026: First round of trial testing, followed by an internal accuracy review

- April 2026: Second round of testing and accuracy review, followed by sharing any updates on the Blacksky page

- May 2026: Launch Blacksky-only posts with ML moderation

During this testing period, we will run two rounds of trial testing to ensure accuracy and share updates and findings on our Blacksky page before launch, so that the community is aware of the results. The current timeline slates Blacksky-only posts to go live in May 2026.
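To illustrate what adjusting a confidence threshold means in practice, here is a minimal Python sketch. It assumes each test item has a model score between 0 and 1 and a human moderator's decision to compare against. The sample scores, the `evaluate_threshold` helper, and the candidate thresholds are all hypothetical and are not results from our testing.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    score: float            # hypothetical model confidence that the item violates the terms of service
    human_violation: bool   # the human moderator's decision for the same item


def evaluate_threshold(samples, threshold):
    """Return (precision, recall) when items scoring >= threshold are labeled."""
    flagged = [s for s in samples if s.score >= threshold]
    true_positives = sum(1 for s in flagged if s.human_violation)
    actual_positives = sum(1 for s in samples if s.human_violation)
    # Precision: of the items the model labeled, how many did humans agree with?
    precision = true_positives / len(flagged) if flagged else 1.0
    # Recall: of the items humans marked as violations, how many did the model catch?
    recall = true_positives / actual_positives if actual_positives else 1.0
    return precision, recall


# Made-up example data, for illustration only.
samples = [
    Sample(0.95, True), Sample(0.80, True), Sample(0.70, False),
    Sample(0.60, True), Sample(0.30, False), Sample(0.10, False),
]

for threshold in (0.5, 0.75, 0.9):
    precision, recall = evaluate_threshold(samples, threshold)
    print(f"threshold={threshold:.2f} precision={precision:.2f} recall={recall:.2f}")
```

In general, a higher threshold applies fewer incorrect labels but misses more harmful content, while a lower threshold catches more at the cost of more labels that human moderators would need to remove.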

After launch, we will closely monitor feedback from the community via support@blacksky.app, alongside internal data, and publish a detailed quarterly “Transparency Report” for the Blacksky community once Blacksky-only posts are launched in May. The first report will go live in August 2026 and the second in December 2026, with further reports to follow.

Each report will consist of the following information:

- Accuracy report (we will provide the number of times a bot applied a label and the percentage of times that label was removed by human moderators)

- Quantitative data set (we will provide how many photos and videos were accurately moderated with machine learning and checked by human moderators)

- Moderation report (we will include a numerical breakdown of the labels the machine learning system applied, by category, via our labeler)
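As a rough sketch of how the accuracy and moderation figures above could be computed, the Python example below tallies bot-applied labels, the share that human moderators removed, and a count per category. The record format, the `accuracy_report` function, and the category names are assumptions made for illustration; they do not describe our labeler's actual data.

```python
from collections import Counter


def accuracy_report(label_events):
    """Summarize bot-applied labels and how often human moderators removed them.

    `label_events` is a list of dicts with two hypothetical keys:
    "category" (str) and "removed_by_human" (bool).
    """
    total = len(label_events)
    removed = sum(1 for event in label_events if event["removed_by_human"])
    removal_rate = removed / total * 100 if total else 0.0
    return {
        "labels_applied": total,
        "labels_removed": removed,
        "removal_rate_percent": removal_rate,
        "labels_by_category": dict(Counter(e["category"] for e in label_events)),
    }


# Made-up records, for illustration only.
events = [
    {"category": "harassment", "removed_by_human": False},
    {"category": "harassment", "removed_by_human": True},
    {"category": "spam", "removed_by_human": False},
    {"category": "spam", "removed_by_human": False},
]

report = accuracy_report(events)
print(report)
```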

Even with our reports and feedback from the community, we want to ensure that community members keep the autonomy to appeal any label applied to their post or account. When an appeal is sent, it will be investigated and the machine-applied label will be checked for accuracy; if the label is incorrect, it will be removed.

Peer moderation

An alternative to a machine learning model is an entirely human-run moderation system. It is a longer road that will require more coordination and effort, and it does not limit exposure to damaging content. However, a structured peer moderation system would give the community the most control over the moderation process. This is how we would envision peer moderation:

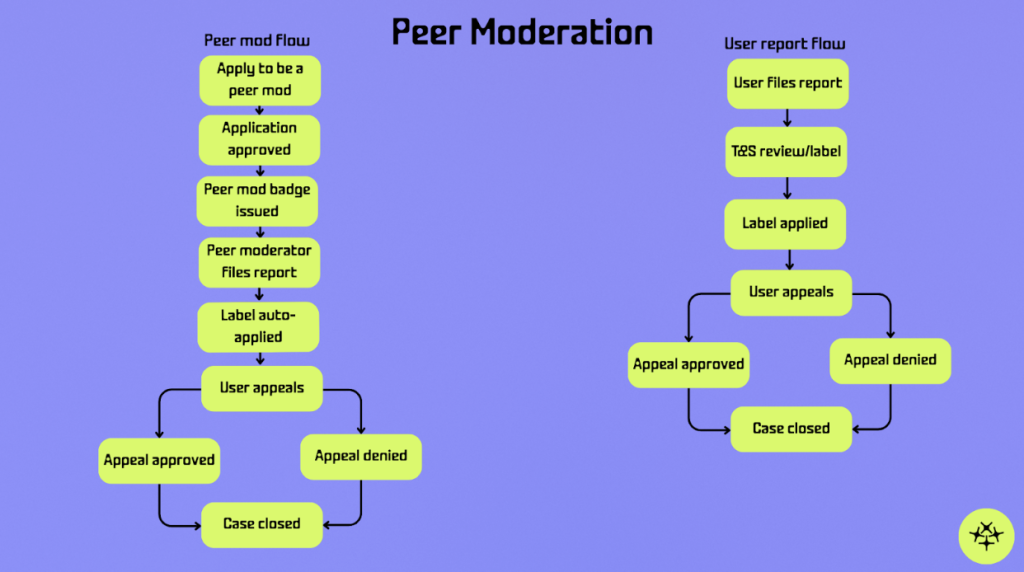

In this model, community members can apply to become peer moderators. Those accepted remain regular members of the community but are given a badge and limited moderation capabilities through the reporting system. When regular users submit reports, those reports will continue to go through the standard moderation pipeline and will be reviewed by our Trust and Safety team.

But when a peer moderator submits a report, the reason they select is automatically applied as a label to the reported post or account. This allows trusted community members to help surface and label problematic content more quickly, while still allowing for review when needed. Peer moderators would operate entirely within blacksky.community and remain peers of other users, with the difference being their ability to apply labels through the reporting system.
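The difference between the two reporting paths can be sketched in a few lines of Python. This is a simplified illustration under our own assumptions, not the actual reporting system: the `Report` and `ModerationState` types, the `handle_report` function, and the example handles are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Report:
    reporter: str   # handle of the person submitting the report
    subject: str    # the reported post or account
    reason: str     # the reason the reporter selected


@dataclass
class ModerationState:
    peer_moderators: set = field(default_factory=set)
    labels: dict = field(default_factory=dict)         # subject -> list of applied labels
    review_queue: list = field(default_factory=list)   # reports awaiting Trust and Safety


def handle_report(state, report):
    """Apply a label directly for peer moderators; queue everyone else's reports."""
    if report.reporter in state.peer_moderators:
        # The selected reason becomes the label on the reported post or account.
        state.labels.setdefault(report.subject, []).append(report.reason)
        return "labeled"
    # Regular reports follow the standard pipeline and wait for review.
    state.review_queue.append(report)
    return "queued"


state = ModerationState(peer_moderators={"alice.example"})
print(handle_report(state, Report("alice.example", "post-1", "harassment")))
print(handle_report(state, Report("bob.example", "post-2", "spam")))
```

The sketch leaves out follow-up review of labels applied by peer moderators, which would still be possible when needed.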

After a moderation decision has been made, the user whose content was moderated has the opportunity to appeal, which triggers a secondary review of the case. If the appeal is approved, the previously applied moderation label will be reversed. If the appeal is denied, the original moderation decision will remain in place. Once the appeal process has concluded, the case is formally closed.

Based on an estimated moderation volume of 100,000 images and videos per month, a peer moderation system would likely require a pool of roughly ten to fifteen moderators. A pool of this size ensures coverage when moderators are unavailable, allows for second opinions on complex cases, and reduces the risk of decision fatigue.
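For a sense of scale, the short Python calculation below spreads the estimated monthly volume evenly across the moderator pool. It is an upper bound under simplifying assumptions that are ours, not part of the proposal: a 30-day month, every item reviewed by exactly one person, and every moderator active every day.

```python
MONTHLY_ITEMS = 100_000   # estimated images and videos per month
DAYS_PER_MONTH = 30       # assumption, used only to get a rough daily figure


def items_per_moderator_per_day(moderators):
    """Average daily load if all items were split evenly across the pool."""
    return MONTHLY_ITEMS / DAYS_PER_MONTH / moderators


for pool_size in (10, 15):
    load = round(items_per_moderator_per_day(pool_size))
    print(f"{pool_size} moderators: about {load} items each per day")
```

Under these assumptions the load comes to roughly 333 items per person per day with ten moderators and roughly 222 with fifteen.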

If we move forward with peer moderation, an ambitious timeline that allows us to source, vet, train, and develop processes with volunteer moderators could look like:

- March 2026: Recruitment and introduction calls

- April 2026: Training and starting to moderate posts

- May 2026: Launch Blacksky-only posts with peer moderation team

To ensure quality in moderation, our Trust and Safety team will review a small, randomly selected percentage of peer-moderator-labeled posts by the end of each week. This keeps moderation reliable and puts a safeguard in place against potential moderator abuse.
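A weekly quality check like this could be drawn with a simple random sample, as in the Python sketch below. The 5% fraction, the `sample_for_review` function, and the post identifiers are placeholders chosen for illustration; the actual percentage has not been decided.

```python
import random


def sample_for_review(labeled_post_ids, fraction=0.05, seed=None):
    """Pick a random subset of peer-moderator-labeled posts for a second look.

    `fraction` is a hypothetical review rate, not a figure from the proposal.
    """
    if not labeled_post_ids:
        return []
    rng = random.Random(seed)  # a seed makes the draw repeatable for auditing
    # Always review at least one post when anything was labeled that week.
    count = max(1, round(len(labeled_post_ids) * fraction))
    return rng.sample(labeled_post_ids, count)


# Made-up identifiers standing in for one week of labeled posts.
week_of_posts = [f"post-{i}" for i in range(200)]
picked = sample_for_review(week_of_posts, fraction=0.05, seed=1)
print(len(picked), "posts selected for Trust and Safety review")
```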

A hybrid approach

We want to make it clear that these options are not mutually exclusive. We are always people working with technology, and one won’t work without the other. If we implement a machine learning model, our moderators will work to make sure it is flagging what our team sees fit. If we implement a peer moderation system, we’ll need to develop the tools for everyday users to take part in moderating content. And lastly, we can take a hybrid approach, using both machine learning and impassioned volunteers to help make the system better and keep harmful content out of our community.

This hybrid approach is used by many platforms today, such as Reddit and Wikipedia, to keep community input integral to the use of these models without depending on it entirely, which would risk exposing the wider community to damaging content.

Especially for marginalized communities online, the ability to set and enforce our own norms, rather than relying on platforms with historically poor track records on racial content moderation, is necessary democratic infrastructure. No matter which route we take, this is exactly what we’re building.

You decide

We have written this proposal to give you all insight into how we’re approaching privacy and moderation and get your input, as our community, on how to move forward. Follow this link to another People’s Assembly to cast your vote with us on how you want Blacksky-only posts moderated.